Capelli's identity

In mathematics, Capelli's identity, named after Alfredo Capelli (1887), is an analogue of the formula det(AB) = det(A) det(B), for certain matrices with noncommuting entries, related to the representation theory of the Lie algebra $\mathfrak{gl}_n$. It can be used to relate an invariant ƒ to the invariant Ωƒ, where Ω is Cayley's Ω process.

Statement

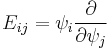

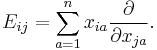

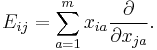

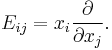

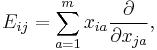

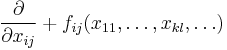

Suppose that xij for i,j = 1,...,n are commuting variables. Write Eij for the polarization operator

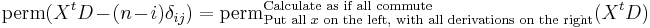

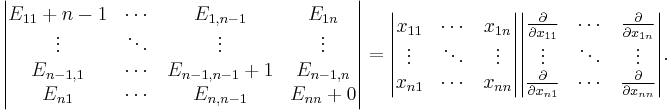

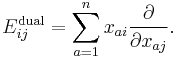

The Capelli identity states that the following differential operators, expressed as determinants, are equal:

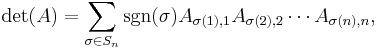

Both sides are differential operators. The determinant on the left has non-commuting entries, and is expanded with all terms preserving their "left to right" order. Such a determinant is often called a column-determinant, since it can be obtained by the column expansion of the determinant starting from the first column. It can be formally written as

where in the product first come the elements from the first column, then from the second and so on. The determinant on the far right is Cayley's omega process, and the one on the left is the Capelli determinant.

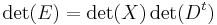

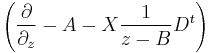

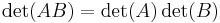

The operators Eij can be written in a matrix form:

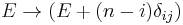

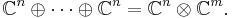

where  are matrices with elements Eij, xij,

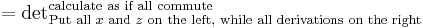

are matrices with elements Eij, xij,  respectively. If all elements in these matrices would be commutative then clearly

respectively. If all elements in these matrices would be commutative then clearly  . The Capelli identity shows that despite noncommutativity there exists a "quantization" of the formula above. The only price for the noncommutivity is a small correction:

. The Capelli identity shows that despite noncommutativity there exists a "quantization" of the formula above. The only price for the noncommutivity is a small correction:  on the left hand side. For generic noncommutative matrices formulas like

on the left hand side. For generic noncommutative matrices formulas like

do not exist, and the notion of the 'determinant' itself does not make sense for generic noncommutative matrices. That is why the Capelli identity still holds some mystery, despite many proofs offered for it. A very short proof does not seem to exist. Direct verification of the statement can be given as an exercise for n' = 2, but is already long for n = 3.
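The n = 2 case can also be checked mechanically. The following is a minimal sketch, not part of the original presentation: polynomials in x11, x12, x21, x22 are stored as dictionaries from exponent tuples to integer coefficients, and all helper names (`add`, `mul_x`, `d_x`, `E`, `capelli_lhs`, `capelli_rhs`) are hypothetical. It compares the column-determinant (E11 + 1)E22 − E21E12 with det(X) det(D) on monomials of bounded exponent, which supports the identity on those inputs but is not a proof.

```python
from itertools import product

# Variable order (x11, x12, x21, x22); a polynomial is a dict that maps
# exponent tuples to integer coefficients. All names here are illustrative.
IDX = {(1, 1): 0, (1, 2): 1, (2, 1): 2, (2, 2): 3}

def add(p, q, c=1):
    """Return p + c*q, dropping zero coefficients."""
    r = dict(p)
    for e, v in q.items():
        r[e] = r.get(e, 0) + c * v
        if r[e] == 0:
            del r[e]
    return r

def mul_x(i, j, p):
    """Multiply p by the variable x_ij."""
    k = IDX[(i, j)]
    return {e[:k] + (e[k] + 1,) + e[k + 1:]: v for e, v in p.items()}

def d_x(i, j, p):
    """Differentiate p with respect to x_ij."""
    k = IDX[(i, j)]
    return {e[:k] + (e[k] - 1,) + e[k + 1:]: v * e[k]
            for e, v in p.items() if e[k] > 0}

def E(i, j, p):
    """Polarization operator E_ij = sum_a x_ia d/dx_ja for n = 2."""
    r = {}
    for a in (1, 2):
        r = add(r, mul_x(i, a, d_x(j, a, p)))
    return r

def capelli_lhs(p):
    """Apply the column-determinant (E11 + 1) E22 - E21 E12 to p."""
    q = E(2, 2, p)
    first = add(E(1, 1, q), q)      # (E11 + 1) E22 p
    second = E(2, 1, E(1, 2, p))    # E21 E12 p (rightmost factor acts first)
    return add(first, second, -1)

def capelli_rhs(p):
    """Apply det(X) det(D) to p: differentiate first, then multiply."""
    dp = add(d_x(1, 1, d_x(2, 2, p)), d_x(1, 2, d_x(2, 1, p)), -1)
    return add(mul_x(1, 1, mul_x(2, 2, dp)), mul_x(1, 2, mul_x(2, 1, dp)), -1)

def monomials(max_exp):
    """All monomials whose four exponents are at most max_exp."""
    return [{e: 1} for e in product(range(max_exp + 1), repeat=4)]

# Both sides are linear, so agreement on monomials gives agreement on
# every polynomial built from them.
assert all(capelli_lhs(m) == capelli_rhs(m) for m in monomials(2))
```

Dropping the "+ 1" in `capelli_lhs` makes the final assertion fail, which illustrates that the diagonal correction is needed.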

Relations with representation theory

Consider the following slightly more general context. Suppose that n and m are two integers and xij for i = 1,...,n,j = 1,...,m, be commuting variables. Redefine Eij by almost the same formula:

with the only difference that summation index a ranges from 1 to m. One can easily see that such operators satisfy the commutation relations:

Here ![[a,b]](/2012-wikipedia_en_all_nopic_01_2012/I/2c3d331bc98b44e71cb2aae9edadca7e.png) denotes the commutator

denotes the commutator  . These are the same commutation relations which are satisfied by the matrices

. These are the same commutation relations which are satisfied by the matrices  which have zeros everywhere except the position (i,j), where 1 stands. (

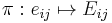

which have zeros everywhere except the position (i,j), where 1 stands. ( are sometimes called matrix units). Hence we conclude that the correspondence

are sometimes called matrix units). Hence we conclude that the correspondence  defines a representation of the Lie algebra

defines a representation of the Lie algebra  in the vector space of polynomials of xij.

in the vector space of polynomials of xij.
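The commutation relations can be derived in a few lines. The sketch below assumes the definition $E_{ij} = \sum_a x_{ia}\,\partial/\partial x_{ja}$ and writes $\partial_{ja}$ for $\partial/\partial x_{ja}$ (a shorthand introduced here). It uses only $[\partial_{ja}, x_{kb}] = \delta_{jk}\delta_{ab}$ and the fact that the variables commute among themselves, as do the derivatives:

```latex
\begin{aligned}
[x_{ia}\partial_{ja},\, x_{kb}\partial_{lb}]
  &= x_{ia}\,[\partial_{ja}, x_{kb}]\,\partial_{lb}
   + x_{kb}\,[x_{ia}, \partial_{lb}]\,\partial_{ja} \\
  &= \delta_{jk}\delta_{ab}\, x_{ia}\partial_{lb}
   - \delta_{il}\delta_{ab}\, x_{kb}\partial_{ja}, \\
[E_{ij}, E_{kl}]
  &= \sum_{a,b} [x_{ia}\partial_{ja},\, x_{kb}\partial_{lb}]
   = \delta_{jk}\sum_a x_{ia}\partial_{la}
   - \delta_{il}\sum_a x_{ka}\partial_{ja}
   = \delta_{jk}E_{il} - \delta_{il}E_{kj}.
\end{aligned}
```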

Case m = 1 and representation Sk Cn

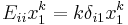

It is especially instructive to consider the special case m = 1; in this case we have xi1, which is abbreviated as xi:

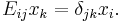

In particular, for the polynomials of the first degree it is seen that:

Hence the action of  restricted to the space of first-order polynomials is exactly the same as the action of matrix units

restricted to the space of first-order polynomials is exactly the same as the action of matrix units  on vectors in

on vectors in  . So, from the representation theory point of view, the subspace of polynomials of first degree is a subrepresentation of the Lie algebra

. So, from the representation theory point of view, the subspace of polynomials of first degree is a subrepresentation of the Lie algebra  , which we identified with the standard representation in

, which we identified with the standard representation in  . Going further, it is seen that the differential operators

. Going further, it is seen that the differential operators  preserve the degree of the polynomials, and hence the polynomials of each fixed degree form a subrepresentation of the Lie algebra

preserve the degree of the polynomials, and hence the polynomials of each fixed degree form a subrepresentation of the Lie algebra  . One can see further that the space of homogeneous polynomials of degree k can be identified with the symmetric tensor power

. One can see further that the space of homogeneous polynomials of degree k can be identified with the symmetric tensor power  of the standard representation

of the standard representation  .

.

One can also easily identify the highest weight structure of these representations. The monomial  is a highest weight vector, indeed:

is a highest weight vector, indeed:  for i < j. Its highest weight equals to (k, 0, ... ,0), indeed:

for i < j. Its highest weight equals to (k, 0, ... ,0), indeed:  .

.

Such representation is sometimes called bosonic representation of  . Similar formulas

. Similar formulas  define the so-called fermionic representation, here

define the so-called fermionic representation, here  are anti-commuting variables. Again polynomials of k-th degree form an irreducible subrepresentation which is isomorphic to

are anti-commuting variables. Again polynomials of k-th degree form an irreducible subrepresentation which is isomorphic to  i.e. anti-symmetric tensor power of

i.e. anti-symmetric tensor power of  . Highest weight of such representation is (0, ..., 0, 1, 0, ..., 0). These representations for k = 1, ..., n are fundamental representations of

. Highest weight of such representation is (0, ..., 0, 1, 0, ..., 0). These representations for k = 1, ..., n are fundamental representations of  .

.

Capelli identity for m = 1

Let us return to the Capelli identity. One can prove the following:

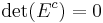

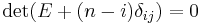

the motivation for this equality is the following: consider  for some commuting variables

for some commuting variables  . The matrix

. The matrix  is of rank one and hence its determinant is equal to zero. Elements of matrix

is of rank one and hence its determinant is equal to zero. Elements of matrix  are defined by the similar formulas, however, its elements do not commute. The Capelli identity shows that the commutative identity:

are defined by the similar formulas, however, its elements do not commute. The Capelli identity shows that the commutative identity:  can be preserved for the small price of correcting matrix

can be preserved for the small price of correcting matrix  by

by  .

.

Let us also mention that similar identity can be given for the characteristic polynomial:

where ![t^{[k]}=t(t%2B1) \cdots (t%2Bk-1)](/2012-wikipedia_en_all_nopic_01_2012/I/6a65b5f1f565d896013ae6e5c35f6324.png) . The commutative counterpart of this is a simple fact that for rank = 1 matrices the characteristic polynomial contains only the first and the second coefficients.

. The commutative counterpart of this is a simple fact that for rank = 1 matrices the characteristic polynomial contains only the first and the second coefficients.

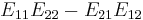

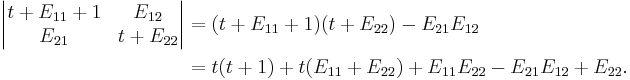

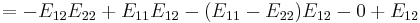

Let us consider an example for n = 2.

Using

we see that this is equal to:
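The vanishing of the Capelli determinant for m = 1 can be tested for small n by brute force. The following is a minimal sketch under stated assumptions: polynomials in x1, ..., xn are dictionaries from exponent tuples to integer coefficients, indices are 0-based in the code, and the helper names (`add`, `E`, `sign`, `capelli_det`) are hypothetical. It expands the column-determinant of E + (n − i)δij over all permutations, with E_ij = x_i ∂/∂x_j, and applies it to a polynomial.

```python
from itertools import permutations

def add(p, q, c=1):
    """Return p + c*q for polynomials stored as {exponents: coefficient}."""
    r = dict(p)
    for e, v in q.items():
        r[e] = r.get(e, 0) + c * v
        if r[e] == 0:
            del r[e]
    return r

def E(i, j, p):
    """Apply E_ij = x_i d/dx_j (0-based indices) to the polynomial p."""
    r = {}
    for e, v in p.items():
        if e[j] > 0:
            f = list(e)
            f[j] -= 1          # differentiate with respect to x_j
            f[i] += 1          # multiply by x_i
            r = add(r, {tuple(f): v * e[j]})
    return r

def sign(perm):
    """Sign of a permutation given as a tuple of 0-based images."""
    s = 1
    for a in range(len(perm)):
        for b in range(a + 1, len(perm)):
            if perm[a] > perm[b]:
                s = -s
    return s

def capelli_det(n, p):
    """Apply the column-determinant det(E + (n - i) delta_ij) to p."""
    total = {}
    for sigma in permutations(range(n)):
        term = p
        # Factors are ordered by column; the rightmost (last column) acts first.
        for col in reversed(range(n)):
            row = sigma[col]
            new = E(row, col, term)
            if row == col:
                # Capelli correction n - i, with i the 1-based diagonal index.
                new = add(new, term, n - (row + 1))
            term = new
        total = add(total, term, sign(sigma))
    return total

# The operator annihilates these sample polynomials for n = 2 and n = 3.
assert capelli_det(2, {(2, 1): 1}) == {}
assert capelli_det(3, {(0, 0, 1): 1}) == {}
assert capelli_det(3, {(1, 2, 1): 1, (0, 0, 3): 5}) == {}
```

The assertions cover only a few sample polynomials; they illustrate the statement for n = 2 and n = 3 rather than prove it.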

The universal enveloping algebra  and its center

and its center

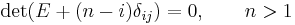

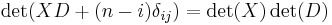

An interesting property of the Capelli determinant is that it commutes with all operators Eij, that is the commutator ![[ E_{ij}, \det(E%2B(n-i)\delta_{ij})]=0](/2012-wikipedia_en_all_nopic_01_2012/I/ff8d3caec0ab1466eaddeb36b3e7ad90.png) is equal to zero. It can be generalized:

is equal to zero. It can be generalized:

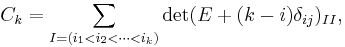

Consider any elements Eij in any ring, such that they satisfy the commutation relation ![[ E_{ij}, E_{kl}] = \delta_{jk}E_{il}- \delta_{il}E_{kj}](/2012-wikipedia_en_all_nopic_01_2012/I/479bf96a1d6f446415bc2bb86f0ad924.png) , (so they can be differential operators above, matrix units eij or any other elements) define elements Ck as follows:

, (so they can be differential operators above, matrix units eij or any other elements) define elements Ck as follows:

where ![t^{[k]}=t(t%2B1)\cdots(t%2Bk-1),](/2012-wikipedia_en_all_nopic_01_2012/I/bee23cf270f4df9861dc4aaa94401ce8.png)

then:

- elements Ck commute with all elements Eij

- elements $C_k$ can be given by formulas similar to the commutative case:

$$C_k = \sum_{I=(i_1<i_2<\cdots<i_{n-k})} \det\bigl(E_{i_a i_b} + (n-k-a)\,\delta_{ab}\bigr)_{a,b=1,\dots,n-k},$$

i.e. they are sums of principal minors of the matrix E, modulo the Capelli correction. In particular the element $C_0$ is the Capelli determinant considered above.

These statements are interrelated with the Capelli identity, as will be discussed below, and, as with the identity itself, a direct proof of a few lines does not seem to exist, despite the simplicity of the formulation.

The universal enveloping algebra

can defined as an algebra generated by

- Eij

subject to the relations

alone. The proposition above shows that elements Ckbelong to the center of  . It can be shown that they actually are free generators of the center of

. It can be shown that they actually are free generators of the center of  . They are sometimes called Capelli generators. The Capelli identities for them will be discussed below.

. They are sometimes called Capelli generators. The Capelli identities for them will be discussed below.

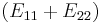

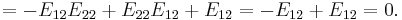

Consider an example for n = 2.

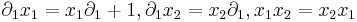

It is immediate to check that element  commute with

commute with  . (It corresponds to an obvious fact that the identity matrix commute with all other matrices). More instructive is to check commutativity of the second element with

. (It corresponds to an obvious fact that the identity matrix commute with all other matrices). More instructive is to check commutativity of the second element with  . Let us do it for

. Let us do it for  :

:

We see that the naive determinant  will not commute with

will not commute with  and the Capelli's correction

and the Capelli's correction  is essential to ensure the centrality.

is essential to ensure the centrality.

General m and dual pairs

Let us return to the general case:

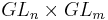

for arbitrary n and m. Definition of operators Eij can be written in a matrix form:  , where

, where  is

is  matrix with elements

matrix with elements  ;

;  is

is  matrix with elements

matrix with elements  ;

;  is

is  matrix with elements

matrix with elements  .

.

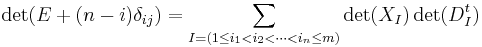

Capelli–Cauchy–Binet identities

For general m matrix E is given as product of the two rectangular matrices: X and transpose to D. If all elements of these matrices would commute then one knows that the determinant of E can be expressed by the so-called Cauchy–Binet formula via minors of X and D. An analogue of this formula also exists for matrix E again for the same mild price of the correction  :

:

,

,

In particular (similar to the commutative case): if m<n, then  ; if m=n we return to the identity above.

; if m=n we return to the identity above.

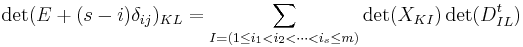

Let us also mention that, as in the commutative case (see Cauchy–Binet for minors), one can express not only the determinant of E but also its minors via minors of X and D:

$$\det\bigl(E_{KL}+(s-j)\,\delta_{k_i l_j}\bigr) = \sum_{I=(1\le i_1<i_2<\cdots<i_s\le m)} \det(X_{KI})\det(D^t_{IL}).$$

Here K = (k1 < k2 < ... < ks) and L = (l1 < l2 < ... < ls) are arbitrary multi-indices; as usual, $M_{KL}$ denotes the submatrix of M formed by the elements $M_{k_a l_b}$, and the correction is written at position (i, j) of the s × s minor. Note that the Capelli correction now contains s, not n as in the previous formula. For s = 1 the correction disappears and we recover the definition of E as the product of X and the transpose of D. Let us also mention that for generic K, L the corresponding minors do not commute with all elements $E_{ij}$, so Capelli-type identities exist not only for central elements.

As a corollary of this formula and the one for the characteristic polynomial in the previous section, let us mention the following:

$$\det(t+E+(n-i)\delta_{ij}) = t^{[n]}+\sum_{k=n-1,\dots,0}t^{[k]} \sum_{I,J} \det(X_{IJ}) \det(D^t_{JI}),$$

where the inner sum runs over multi-indices I and J. This formula is similar to the commutative case, modulo the correction $+(n-i)\delta_{ij}$ on the left-hand side and $t^{[n]}$ instead of $t^n$ on the right-hand side.

Relation to dual pairs

Modern interest in these identities has been much stimulated by Roger Howe, who considered them in his theory of reductive dual pairs (also known as Howe duality). To make first contact with these ideas, let us look more closely at the operators $E_{ij}$. Such operators preserve the degree of polynomials. Let us look at the polynomials of degree 1: $E_{ij} x_{kl} = x_{il}\delta_{jk}$; we see that the index l is preserved. One can see that, from the representation theory point of view, polynomials of the first degree can be identified with the direct sum of representations $\mathbb{C}^n \oplus \cdots \oplus \mathbb{C}^n$; here the l-th subspace (l = 1, ..., m) is spanned by $x_{il}$, i = 1, ..., n. Let us take another look at this vector space:

$$\mathbb{C}^n \oplus \cdots \oplus \mathbb{C}^n = \mathbb{C}^n \otimes \mathbb{C}^m.$$

This point of view gives the first hint of a symmetry between m and n. To deepen this idea, let us consider:

$$E_{ij}^{\text{dual}} = \sum_{a=1}^n x_{ai}\frac{\partial}{\partial x_{aj}}.$$

These operators are given by the same formulas as $E_{ij}$ up to exchanging the two indices of the variables, $x_{ab} \leftrightarrow x_{ba}$; hence by the same arguments we can deduce that the $E_{ij}^{\text{dual}}$ form a representation of the Lie algebra $\mathfrak{gl}_m$ in the vector space of polynomials in the $x_{ij}$. Before going further we can mention the following property: the differential operators $E_{ij}^{\text{dual}}$ commute with the differential operators $E_{kl}$.

The Lie group $GL_n \times GL_m$ acts on the vector space $\mathbb{C}^n \otimes \mathbb{C}^m$ in a natural way. One can show that the corresponding action of the Lie algebra $\mathfrak{gl}_n \times \mathfrak{gl}_m$ is given by the differential operators $E_{ij}$ and $E_{ij}^{\text{dual}}$ respectively. This explains the commutativity of these operators.
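As a quick consistency check of this commutativity (a sketch on polynomials of degree one only, not a proof), one can apply the two kinds of operators in both orders to a single variable $x_{ab}$. The check assumes $E_{ij} = \sum_c x_{ic}\,\partial/\partial x_{jc}$ and takes the dual operators to be given by the index-swapped formula $E^{\text{dual}}_{kl} = \sum_c x_{ck}\,\partial/\partial x_{cl}$:

```latex
\begin{aligned}
E_{ij}\, x_{ab} &= \delta_{ja}\, x_{ib}, \qquad
E^{\text{dual}}_{kl}\, x_{ab} = \delta_{lb}\, x_{ak}, \\
E_{ij}\, E^{\text{dual}}_{kl}\, x_{ab} &= \delta_{lb}\, E_{ij}\, x_{ak} = \delta_{lb}\,\delta_{ja}\, x_{ik}, \\
E^{\text{dual}}_{kl}\, E_{ij}\, x_{ab} &= \delta_{ja}\, E^{\text{dual}}_{kl}\, x_{ib} = \delta_{ja}\,\delta_{lb}\, x_{ik}.
\end{aligned}
```

Both orders give the same result: $E_{ij}$ only changes the first index of a variable and $E^{\text{dual}}_{kl}$ only the second.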

The following deeper properties actually hold true:

- The only differential operators which commute with the $E_{ij}$ are polynomials in the $E_{ij}^{\text{dual}}$, and vice versa.

- The decomposition of the vector space of polynomials into a direct sum of tensor products of irreducible representations of $GL_n$ and $GL_m$ can be given as follows:

$$\mathbb{C}[x_{ij}] = S(\mathbb{C}^n \otimes \mathbb{C}^m) = \sum_D \rho_n^D \otimes \rho_m^{D'}.$$

The summands are indexed by the Young diagrams D, and the representations $\rho^D$ are mutually non-isomorphic. The diagram $D$ determines $D'$ and vice versa.

- In particular, the representation of the big group $GL_n \times GL_m$ is multiplicity free, that is, each irreducible representation occurs only once.

One easily observes the strong similarity to Schur–Weyl duality.

Generalizations

Much work has been done on the identity and its generalizations. About two dozen mathematicians and physicists have contributed to the subject, to name a few: R. Howe, B. Kostant,[1][2] Fields medalist A. Okounkov,[3][4] A. Sokal,[5] D. Zeilberger.[6]

It seems that historically the first generalizations were obtained by Herbert Westren Turnbull in 1948,[7] who found the generalization for the case of symmetric matrices (see [5][6] for modern treatments).

The other generalizations can be divided into several patterns. Most of them are based on the Lie algebra point of view. Such generalizations consist of changing the Lie algebra $\mathfrak{gl}_n$ to simple Lie algebras[8] and their super,[9][10] q-,[11][12] and current versions.[13] The identity can also be generalized for different reductive dual pairs.[14][15] Finally, one can consider not only the determinant of the matrix E, but also its permanent,[16] the traces of its powers, and immanants.[3][4][17][18] Let us mention a few more papers;[19][20][21] still, the list of references is incomplete. It was believed for quite a long time that the identity is intimately related to semi-simple Lie algebras. Surprisingly, a new purely algebraic generalization of the identity was found in 2008[5] by S. Caracciolo, A. Sportiello, and A. D. Sokal, which has nothing to do with any Lie algebras.

Turnbull's identity for symmetric matrices

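Consider symmetric matrices

$$X=\begin{pmatrix}
x_{11} & x_{12} & x_{13} & \cdots & x_{1n} \\
x_{12} & x_{22} & x_{23} & \cdots & x_{2n} \\
x_{13} & x_{23} & x_{33} & \cdots & x_{3n} \\
\vdots & \vdots & \vdots & \ddots & \vdots \\
x_{1n} & x_{2n} & x_{3n} & \cdots & x_{nn}
\end{pmatrix},
\qquad
D=\begin{pmatrix}
\frac{\partial}{\partial x_{11}} & \frac{\partial}{\partial x_{12}} & \frac{\partial}{\partial x_{13}} & \cdots & \frac{\partial}{\partial x_{1n}} \\[6pt]
\frac{\partial}{\partial x_{12}} & \frac{\partial}{\partial x_{22}} & \frac{\partial}{\partial x_{23}} & \cdots & \frac{\partial}{\partial x_{2n}} \\[6pt]
\frac{\partial}{\partial x_{13}} & \frac{\partial}{\partial x_{23}} & \frac{\partial}{\partial x_{33}} & \cdots & \frac{\partial}{\partial x_{3n}} \\[6pt]
\vdots & \vdots & \vdots & \ddots & \vdots \\
\frac{\partial}{\partial x_{1n}} & \frac{\partial}{\partial x_{2n}} & \frac{\partial}{\partial x_{3n}} & \cdots & \frac{\partial}{\partial x_{nn}}
\end{pmatrix}.$$

Herbert Westren Turnbull[7] in 1948 discovered the following identity:

$$\det(XD+(n-i)\delta_{ij}) = \det(X)\det(D).$$

A combinatorial proof can be found in [6]; another proof and further generalizations are given in [5]. See also the discussion below.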

The Howe–Umeda–Kostant–Sahi identity for antisymmetric matrices

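Consider antisymmetric matrices

$$X=\begin{pmatrix}
0 & x_{12} & x_{13} & \cdots & x_{1n} \\
-x_{12} & 0 & x_{23} & \cdots & x_{2n} \\
-x_{13} & -x_{23} & 0 & \cdots & x_{3n} \\
\vdots & \vdots & \vdots & \ddots & \vdots \\
-x_{1n} & -x_{2n} & -x_{3n} & \cdots & 0
\end{pmatrix},
\qquad
D=\begin{pmatrix}
0 & \frac{\partial}{\partial x_{12}} & \frac{\partial}{\partial x_{13}} & \cdots & \frac{\partial}{\partial x_{1n}} \\[6pt]
-\frac{\partial}{\partial x_{12}} & 0 & \frac{\partial}{\partial x_{23}} & \cdots & \frac{\partial}{\partial x_{2n}} \\[6pt]
-\frac{\partial}{\partial x_{13}} & -\frac{\partial}{\partial x_{23}} & 0 & \cdots & \frac{\partial}{\partial x_{3n}} \\[6pt]
\vdots & \vdots & \vdots & \ddots & \vdots \\[6pt]
-\frac{\partial}{\partial x_{1n}} & -\frac{\partial}{\partial x_{2n}} & -\frac{\partial}{\partial x_{3n}} & \cdots & 0
\end{pmatrix}.$$

Then

$$\det(XD+(n-i)\delta_{ij}) = \det(X)\det(D).$$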

The Caracciolo–Sportiello–Sokal identity for Manin matrices

Consider two matrices M and Y over some associative ring which satisfy the following condition

for some elements Qil. Or ”in words”: elements in j-th column of M commute with elements in k-th row of Y unless j = k, and in this case commutator of the elements Mik and Ykl depends only on i, l, but does not depend on k.

Assume that M is a Manin matrix (the simplest example is the matrix with commuting elements).

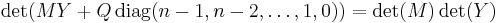

Then for the square matrix case

Here Q is a matrix with elements Qil, and diag(n − 1, n − 2, ..., 1, 0) means the diagonal matrix with the elements n − 1, n − 2, ..., 1, 0 on the diagonal.

See [5] proposition 1.2' formula (1.15) page 4, our Y is transpose to their B.

Obviously the original Cappeli's identity the particular case of this identity. Moreover from this identity one can see that in the original Capelli's identity one can consider elements

for arbitrary functions fij and the identity still will be true.
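The reduction to the original identity can be sketched as follows, assuming the commutation condition takes the form $[M_{ij}, Y_{kl}] = -\delta_{jk}Q_{il}$ and writing $\partial_{lk}$ for $\partial/\partial x_{lk}$ (a shorthand introduced here). Take $M = X$, which has commuting entries and is therefore a Manin matrix, and $Y = D^t$:

```latex
\begin{aligned}
M_{ij} &= x_{ij}, \qquad Y_{kl} = \partial_{lk}, \\
[M_{ij}, Y_{kl}] &= [x_{ij}, \partial_{lk}] = -\delta_{il}\,\delta_{jk}
  \quad\Longrightarrow\quad Q_{il} = \delta_{il}, \\
\det\bigl(X D^t + \mathrm{diag}(n-1, \dots, 1, 0)\bigr) &= \det(X)\,\det(D^t).
\end{aligned}
```

Replacing $\partial_{lk}$ by $\partial_{lk} + f_{lk}(x)$ does not change the commutator, because functions of the $x_{ij}$ commute with each $x_{ij}$. The same $Q$ therefore applies, which is why the shifted derivatives are also covered.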

The Mukhin–Tarasov–Varchenko identity and the Gaudin model

Statement

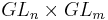

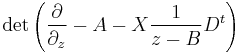

Consider matrices X and D as in Capelli's identity, i.e. with elements  and

and  at position (ij).

at position (ij).

Let z be another formal variable (commuting with x). Let A and B be some matrices which elements are complex numbers.

Here the first determinant is understood (as always) as column-determinant of a matrix with non-commutative entries. The determinant on the right is calculated as if all the elements commute, and putting all x and z on the left, while derivations on the right. (Such recipe is called a Wick ordering in the quantum mechanics).

The Gaudin quantum integrable system and Talalaev's theorem

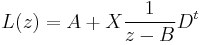

The matrix

is a Lax matrix for the Gaudin quantum integrable spin chain system. D. Talalaev solved the long-standing problem of the explicit solution for the full set of the quantum commuting conservation laws for the Gaudin model, discovering the following theorem.

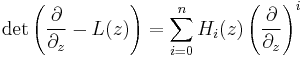

Consider

Then for all i,j,z,w

i.e. Hi(z) are generating functions in z for the differential operators in x which all commute. So they provide quantum commuting conservation laws for the Gaudin model.

Permanents, immanants, traces – "higher Capelli identities"

The original Capelli identity is a statement about determinants. Later, analogous identities were found for permanents, immanants and traces.

Turnbull's identity for permanents of antisymmetric matrices

Consider the antisymmetric matrices X and D with elements xij and corresponding derivations, as in the case of the HUKS identity above.

Then

Let us cite [6]: "...is stated without proof at the end of Turnbull's paper". The authors themselves follow Turnbull: at the very end of their paper they write:

"Since the proof of this last identity is very similar to the proof of Turnbull's symmetric analog (with a slight twist), we leave it as an instructive and pleasant exercise for the reader."

References

Inline

1. Kostant, B.; Sahi, S. (1991), "The Capelli Identity, tube domains, and the generalized Laplace transform", Advances in Math. 87: 71–92, doi:10.1016/0001-8708(91)90062-C

2. Kostant, B.; Sahi, S. (1993), "Jordan algebras and Capelli identities", Inventiones Mathematicae 112 (1): 71–92, doi:10.1007/BF01232451

3. Okounkov, A. (1996), Quantum Immanants and Higher Capelli Identities, arXiv:q-alg/9602028

4. Okounkov, A. (1996), Young Basis, Wick Formula, and Higher Capelli Identities, arXiv:q-alg/9602027

5. Caracciolo, S.; Sportiello, A.; Sokal, A. (2008), Noncommutative determinants, Cauchy–Binet formulae, and Capelli-type identities. I. Generalizations of the Capelli and Turnbull identities, arXiv:0809.3516

6. Foata, D.; Zeilberger, D. (1993), Combinatorial Proofs of Capelli's and Turnbull's Identities from Classical Invariant Theory, arXiv:math/9309212

7. Turnbull, Herbert Westren (1948), "Symmetric determinants and the Cayley and Capelli operators", Proc. Edinburgh Math. Soc. 8 (2): 76–86, doi:10.1017/S0013091500024822

8. Molev, A.; Nazarov, M. (1997), Capelli Identities for Classical Lie Algebras, arXiv:q-alg/9712021

9. Molev, A. (1996), Factorial supersymmetric Schur functions and super Capelli identities, arXiv:q-alg/9606008

10. Nazarov, M. (1996), Capelli identities for Lie superalgebras, arXiv:q-alg/9610032

11. Noumi, M.; Umeda, T.; Wakayama, M. (1994), "A quantum analogue of the Capelli identity and an elementary differential calculus on GLq(n)", Duke Mathematical Journal 76 (2): 567–594, doi:10.1215/S0012-7094-94-07620-5, http://projecteuclid.org/euclid.dmj/1077286975

12. Noumi, M.; Umeda, T.; Wakayama, M. (1996), "Dual pairs, spherical harmonics and a Capelli identity in quantum group theory", Compositio Mathematica 104 (2): 227–277, http://www.numdam.org/item?id=CM_1996__104_3_227_0

13. Mukhin, E.; Tarasov, V.; Varchenko, A. (2006), A generalization of the Capelli identity, arXiv:math.QA/0610799

14. Itoh, M. (2004), "Capelli identities for reductive dual pairs", Advances in Mathematics 194 (2): 345–397, doi:10.1016/j.aim.2004.06.010

15. Itoh, M. (2005), "Capelli Identities for the dual pair (O_M, Sp_N)", Mathematische Zeitschrift 246 (1–2): 125–154, doi:10.1007/s00209-003-0591-2

16. Nazarov, M. (1991), "Quantum Berezinian and the classical Capelli identity", Letters in Mathematical Physics 21 (2): 123–131, doi:10.1007/BF00401646

17. Nazarov, M. (1996), Yangians and Capelli identities, arXiv:q-alg/9601027

18. Molev, A. (1996), A Remark on the Higher Capelli Identities, arXiv:q-alg/9603007

19. Kinoshita, K.; Wakayama, M. (2002), "Explicit Capelli identities for skew symmetric matrices", Proceedings of the Edinburgh Mathematical Society 45 (2): 449–465, doi:10.1017/S0013091500001176

20. Hashimoto, T. (2008), Generating function for GLn-invariant differential operators in the skew Capelli identity, arXiv:0803.1339

21. Nishiyama, K.; Wachi, A. (2008), A note on the Capelli identities for symmetric pairs of Hermitian type, arXiv:0808.0607

General

- Capelli, Alfredo (1887), "Ueber die Zurückführung der Cayley'schen Operation Ω auf gewöhnliche Polar-Operationen", Mathematische Annalen (Berlin / Heidelberg: Springer) 29 (3): 331–338, doi:10.1007/BF01447728, ISSN 1432-1807

- Howe, Roger (1989), "Remarks on classical invariant theory", Transactions of the American Mathematical Society (American Mathematical Society) 313 (2): 539–570, doi:10.2307/2001418, ISSN 0002-9947, JSTOR 2001418, MR0986027

- Howe, Roger; Umeda, Toru (1991), "The Capelli identity, the double commutant theorem, and multiplicity-free actions", Mathematische Annalen 290 (1): 565–619, doi:10.1007/BF01459261

- Umeda, Tôru (1998), "The Capelli identities, a century after", Selected papers on harmonic analysis, groups, and invariants, Amer. Math. Soc. Transl. Ser. 2, 183, Providence, R.I.: Amer. Math. Soc., pp. 51–78, ISBN 978-0-8218-0840-5, MR1615137, http://books.google.com/books?isbn=0821808400

- Weyl, Hermann (1946), The Classical Groups: Their Invariants and Representations, Princeton University Press, ISBN 978-0-691-05756-9, MR0000255, http://books.google.com/?id=zmzKSP2xTtYC, retrieved 03/2007/26
